Store Prompts in code

Humanloop allows you to store Prompt and Agent definitions in your local filesystem and in version control, while still leveraging Humanloop’s prompt management and evaluation capabilities. This guide will walk you through using local Files in your development workflow.

Prerequisites

- A Humanloop account with at least one Prompt or Agent created

- Humanloop SDK installed (which includes the CLI functionality)

Example Prompt for this guide

To follow along with the examples in this guide, create a Prompt named welcome-email in the root directory of your Humanloop workspace.

We’ll create a prompt that generates a welcome email for new customers. The Prompt uses the Humanloop .prompt format which has two main parts:

- A configuration section (between --- marks) that specifies the model settings

- Message templates: a system message setting the context, and a user message with personalization variables
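To make the two-part layout concrete, here is a minimal Python sketch that splits a .prompt file into its configuration section and its message templates. The sample content is hypothetical: the field names (model, temperature), the system and user tags, and the {{customer_name}} variable are assumptions for illustration, not the exact Prompt used in this guide.

```python
# Illustrative only: SAMPLE_PROMPT is a hypothetical .prompt file, not the
# one from this guide. Field names and tags are assumptions.
SAMPLE_PROMPT = """---
model: gpt-4o
temperature: 0.7
---
<system>
You write friendly welcome emails for new customers.
</system>
<user>
Write a welcome email for {{customer_name}}.
</user>
"""


def split_prompt_file(text):
    """Split a .prompt file into (config, templates).

    The config is the block between the first two '---' lines. Only flat
    'key: value' pairs are handled here; a real parser would use YAML.
    """
    lines = [line.rstrip() for line in text.splitlines()]
    if not lines or lines[0] != "---":
        raise ValueError("expected the file to start with a '---' line")
    try:
        end = lines.index("---", 1)
    except ValueError:
        raise ValueError("missing closing '---' line") from None

    config = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            config[key.strip()] = value.strip()

    templates = "\n".join(lines[end + 1:]).strip()
    return config, templates


config, templates = split_prompt_file(SAMPLE_PROMPT)
print(config)
print(templates.splitlines()[0])
```

The sketch keeps every config value as a string, which is enough to inspect a file but not to validate it.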

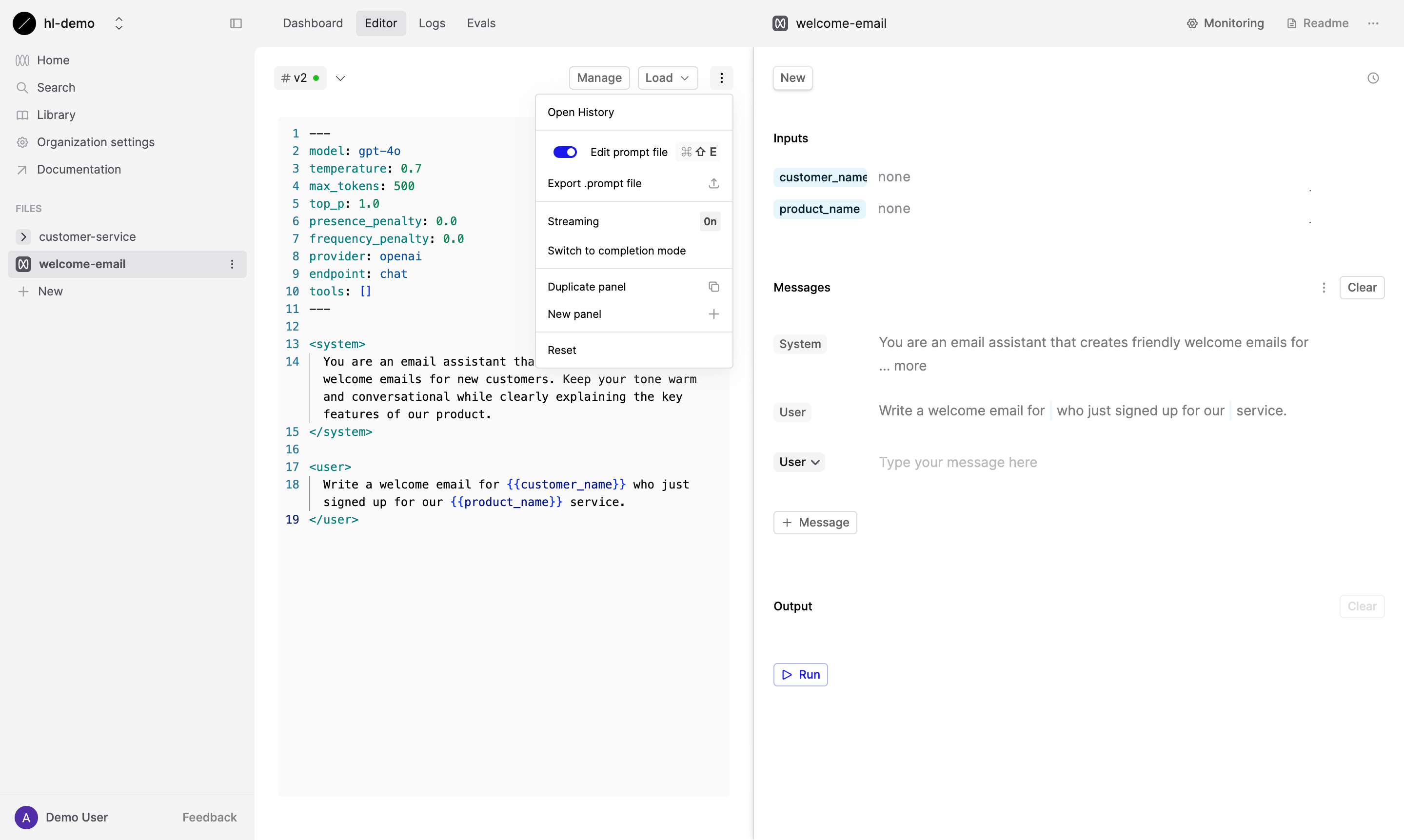

Toggle the Prompt File editor by hitting Cmd + Shift + E (or Ctrl + Shift + E on Windows) and replace the existing content with the following:

To save this new version of the Prompt, press Manage, then Deploy…, select your default environment (typically production), and follow the steps to deploy.

Installing the Humanloop SDK

The Humanloop SDK includes both the programming interface and the CLI tools you’ll need for this guide. For Python, it can be installed with pip install humanloop.

You’ll also need your Humanloop API key, which you can find on the API Keys page in your Organization settings.
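One common way to keep the API key out of source code is to read it from the environment or from a .env file. The sketch below uses only the standard library. The variable name HUMANLOOP_API_KEY and both helper functions are assumptions made for illustration; in practice a library such as python-dotenv would usually do the parsing.

```python
import os
from pathlib import Path


def read_env_file(path=".env"):
    """Parse simple KEY=VALUE lines from a .env file (blank lines and comments skipped)."""
    values = {}
    env_path = Path(path)
    if not env_path.exists():
        return values
    for raw in env_path.read_text().splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        # Drop optional surrounding quotes around the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def get_api_key(env_file=".env", name="HUMANLOOP_API_KEY"):
    """Return the key from the process environment, falling back to the .env file.

    The variable name is an assumption, not something this guide specifies.
    """
    key = os.environ.get(name) or read_env_file(env_file).get(name)
    if not key:
        raise RuntimeError(f"{name} is not set; add it to {env_file} or export it")
    return key
```

Failing early with a clear error is a deliberate choice here: a missing key is easier to diagnose at startup than at the first API call.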

Step 1: Pull Files from Humanloop

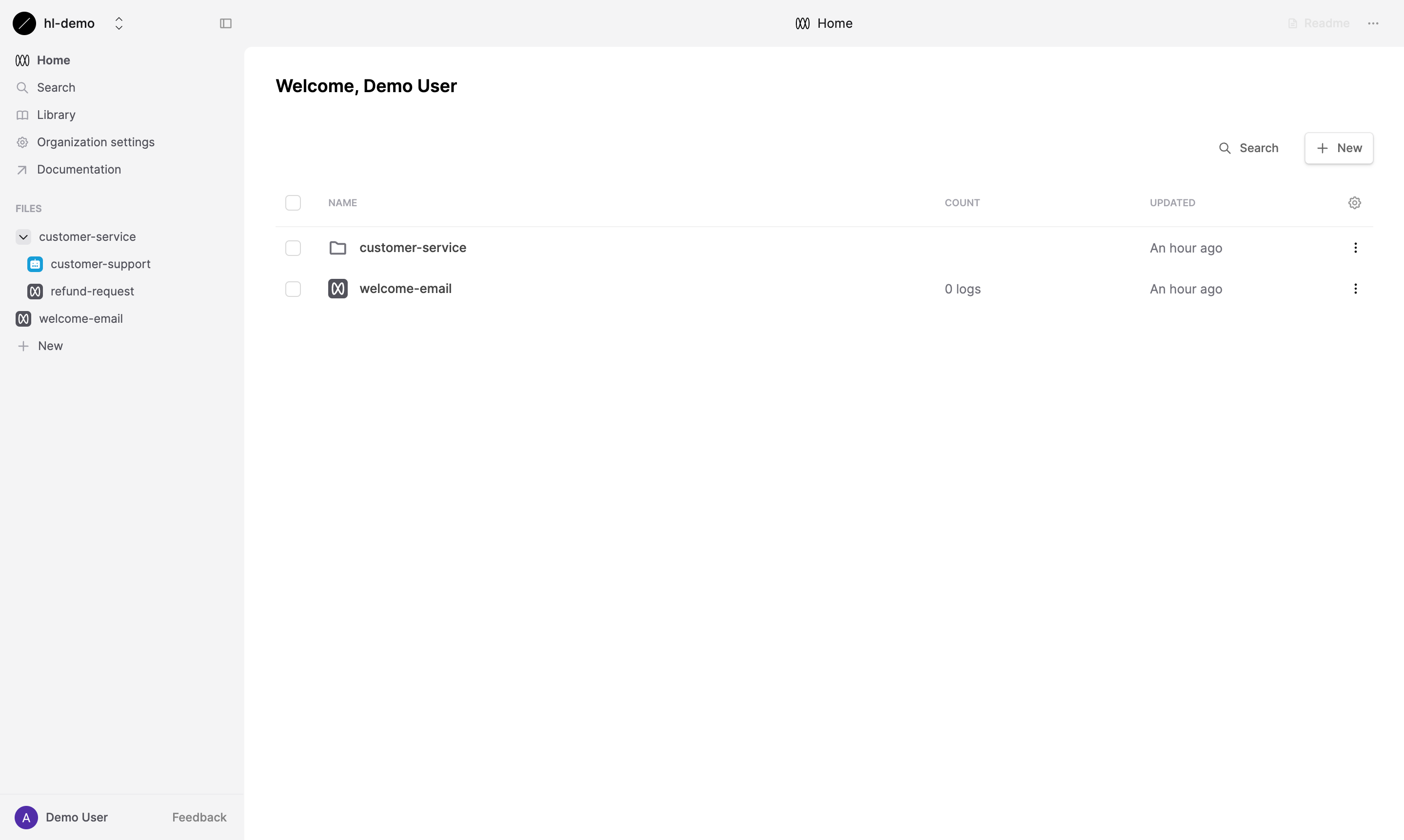

After creating your Prompt in Humanloop (like the example Prompt we set up earlier), you’ll see it in your workspace:

Now you can pull this Prompt from Humanloop to your local environment by running humanloop pull from your project root:

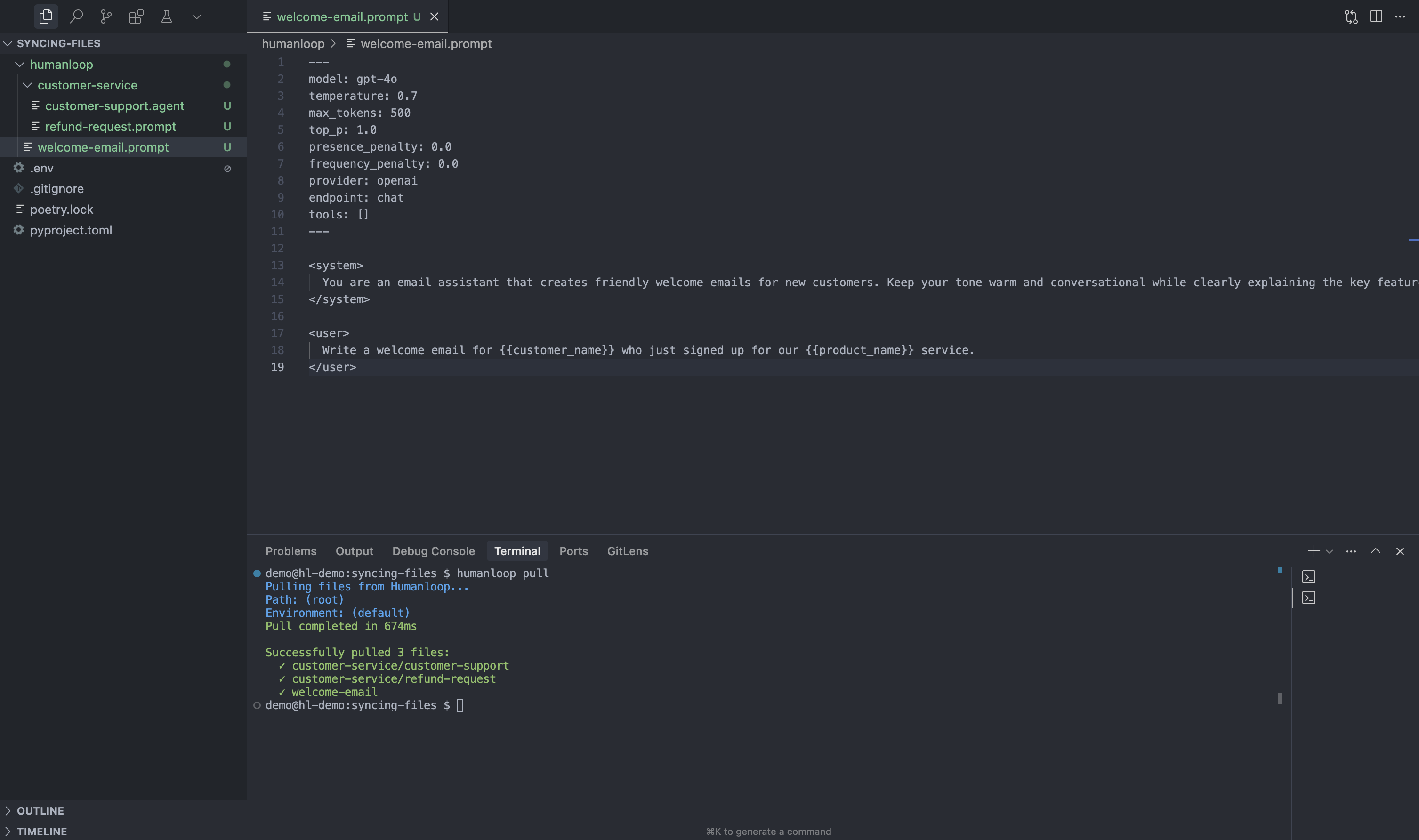

This command clones your Humanloop workspace into a humanloop directory in your project root, maintaining the same folder structure as your remote workspace.

humanloop pull will pull all Files deployed to your default environment (typically production) and store them in the humanloop directory.

To pull only specific Files, use a different environment, or change the local destination directory, see the options listed by humanloop pull --help.
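If you want to run the pull from a script (for example, in a setup task), a small wrapper can assemble the command. This is a sketch under stated assumptions: build_pull_command is a hypothetical helper, and it only knows about --local-files-directory because that is the one option this guide names. Consult the CLI help for the others.

```python
import shlex


def build_pull_command(local_files_directory=None):
    """Build the argument list for `humanloop pull`.

    Hypothetical helper: only --local-files-directory is supported because
    it is the one option named in this guide.
    """
    command = ["humanloop", "pull"]
    if local_files_directory:
        command += ["--local-files-directory", local_files_directory]
    return command


print(shlex.join(build_pull_command()))
print(shlex.join(build_pull_command("custom-dir")))

# To actually run it (requires the CLI to be installed and an API key):
#   import subprocess
#   subprocess.run(build_pull_command(), check=True)
```

Building the command as a list rather than a string avoids shell-quoting problems when the directory name contains spaces.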

Step 2: Use local Files in your code

Now that you have your Prompt locally, you can use it in your code by configuring the SDK to use local Files.
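A minimal Python sketch of calling the local Prompt might look like the following. Several details are assumptions rather than confirmed API: the use_local_files constructor argument, passing the path without the .prompt extension, and the customer_name input variable. A stand-in client is used so the example runs without network access.

```python
from types import SimpleNamespace


def generate_welcome_email(client, customer_name):
    """Call the welcome-email Prompt by path through the given client.

    The `inputs` key `customer_name` is a hypothetical template variable.
    """
    return client.prompts.call(
        path="welcome-email",
        inputs={"customer_name": customer_name},
    )


# With the real SDK, the client would be built roughly like this
# (argument names are assumptions; check the SDK reference):
#   from humanloop import Humanloop
#   client = Humanloop(api_key=..., use_local_files=True)


# Stand-in client that echoes its arguments instead of calling the API.
def fake_call(**kwargs):
    return {"called_with": kwargs}


fake_client = SimpleNamespace(prompts=SimpleNamespace(call=fake_call))
print(generate_welcome_email(fake_client, "Ada"))
```

Keeping the client as a parameter makes the function easy to test with a stub, as shown, and to switch between local and remote Files later.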

You can also log results from your own provider calls by referencing local Files:
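As a rough sketch of logging, the function below passes the File path together with the inputs and the text your own provider returned. The keyword names inputs and output are assumptions about prompts.log, and RecordingPrompts and FakeClient are hypothetical stand-ins so the example runs offline.

```python
class RecordingPrompts:
    """Stand-in for client.prompts that records log() calls instead of sending them."""

    def __init__(self):
        self.logged = []

    def log(self, **kwargs):
        self.logged.append(kwargs)
        return {"id": f"log_{len(self.logged)}"}


class FakeClient:
    """Hypothetical client exposing only the attribute this sketch needs."""

    def __init__(self):
        self.prompts = RecordingPrompts()


def log_welcome_email(client, customer_name, output_text):
    """Log a result produced by your own provider call against the local Prompt.

    Keyword names other than `path` are assumptions about the SDK.
    """
    return client.prompts.log(
        path="welcome-email",
        inputs={"customer_name": customer_name},
        output=output_text,
    )


client = FakeClient()
response = log_welcome_email(client, "Ada", "Welcome aboard, Ada!")
print(response, client.prompts.logged)
```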

Important: When local Files are enabled and you reference a File using its path, the SDK will:

- By default, look for Files in the humanloop directory (this can be customized with local_files_directory / localFilesDirectory)

- Only look for Files in your local directory (there is no fallback to the remote workspace)

- Use the exact local definition, even if newer versions exist in your remote workspace

- Make API calls to Humanloop to execute Prompts and log results
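The lookup rules above can be illustrated with a small Python sketch. It is not the SDK's implementation; resolve_local_file is a hypothetical helper that mimics the described behavior: look in the configured directory only, and fail rather than fall back to the remote workspace.

```python
from pathlib import Path


def resolve_local_file(path, directory="humanloop", extension=".prompt"):
    """Return the local file for a Humanloop path, or raise if it is missing.

    Mirrors the documented behavior in spirit: no remote fallback.
    """
    candidate = Path(directory) / f"{path}{extension}"
    if not candidate.is_file():
        raise FileNotFoundError(
            f"{candidate} not found locally; pull it first (there is no remote fallback)"
        )
    return candidate


try:
    print(resolve_local_file("welcome-email"))
except FileNotFoundError as error:
    print(error)
```

If the directory passed here differs from the one used when pulling, the lookup fails, which is the most common cause of "file not found" errors with local Files.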

Step 3: Verify local File usage

To verify that your code is actually using the local Prompt, you can make a small modification and observe the change in output.

- Open humanloop/welcome-email.prompt in a text editor and modify the system message.

- Call the modified Prompt from your code:

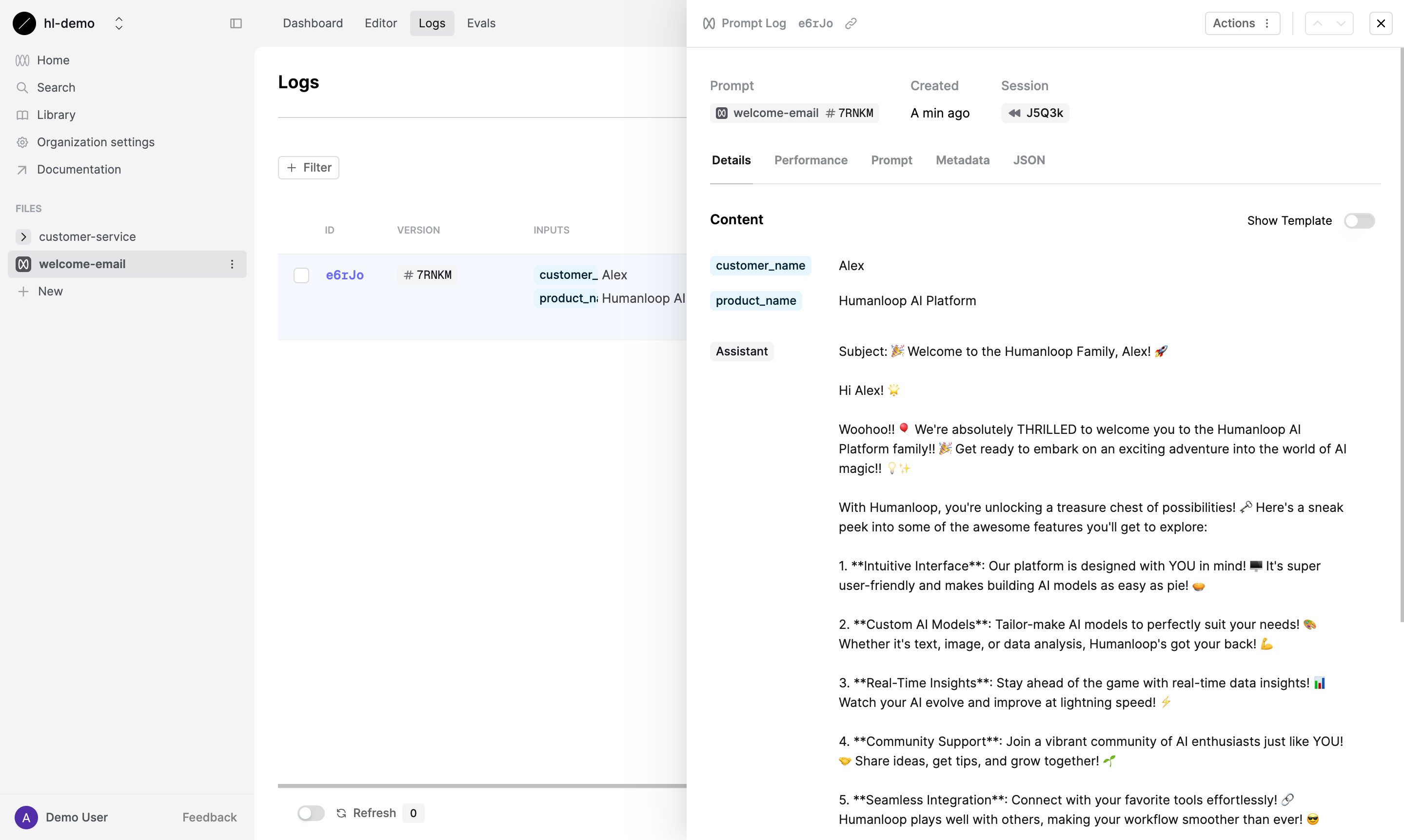

You’ll notice in the UI that a log was created with the new version of the Prompt.

When you call a modified local File, Humanloop uses content hashing to check for changes. If the content differs from an existing version, a new version is automatically created.
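The idea behind content hashing can be sketched in a few lines of Python. This is an illustration, not Humanloop's actual mechanism: the choice of SHA-256 and the in-memory VersionStore are assumptions made to show why unchanged content maps to an existing version while any edit produces a new one.

```python
import hashlib


def content_hash(text):
    """Return a stable fingerprint of the file content (SHA-256 is an assumption)."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


class VersionStore:
    """Hypothetical in-memory store keyed by content hash."""

    def __init__(self):
        self._versions = {}

    def get_or_create(self, content):
        """Return (version_id, created); created is False when the content already exists."""
        digest = content_hash(content)
        created = digest not in self._versions
        if created:
            self._versions[digest] = content
        return digest, created


store = VersionStore()
original = "You write welcome emails."
edited = "You write short, friendly welcome emails."

print(store.get_or_create(original)[1])  # first time this content is seen
print(store.get_or_create(original)[1])  # identical content, existing version
print(store.get_or_create(edited)[1])    # changed content, new version
```

Because the version is derived from the content itself, calling the same unmodified file repeatedly does not create duplicate versions.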

Step 4: Version control

Now that your Prompt is a local File, you can add it to your version control system.

Once your Files are committed, you can track changes from Humanloop too.

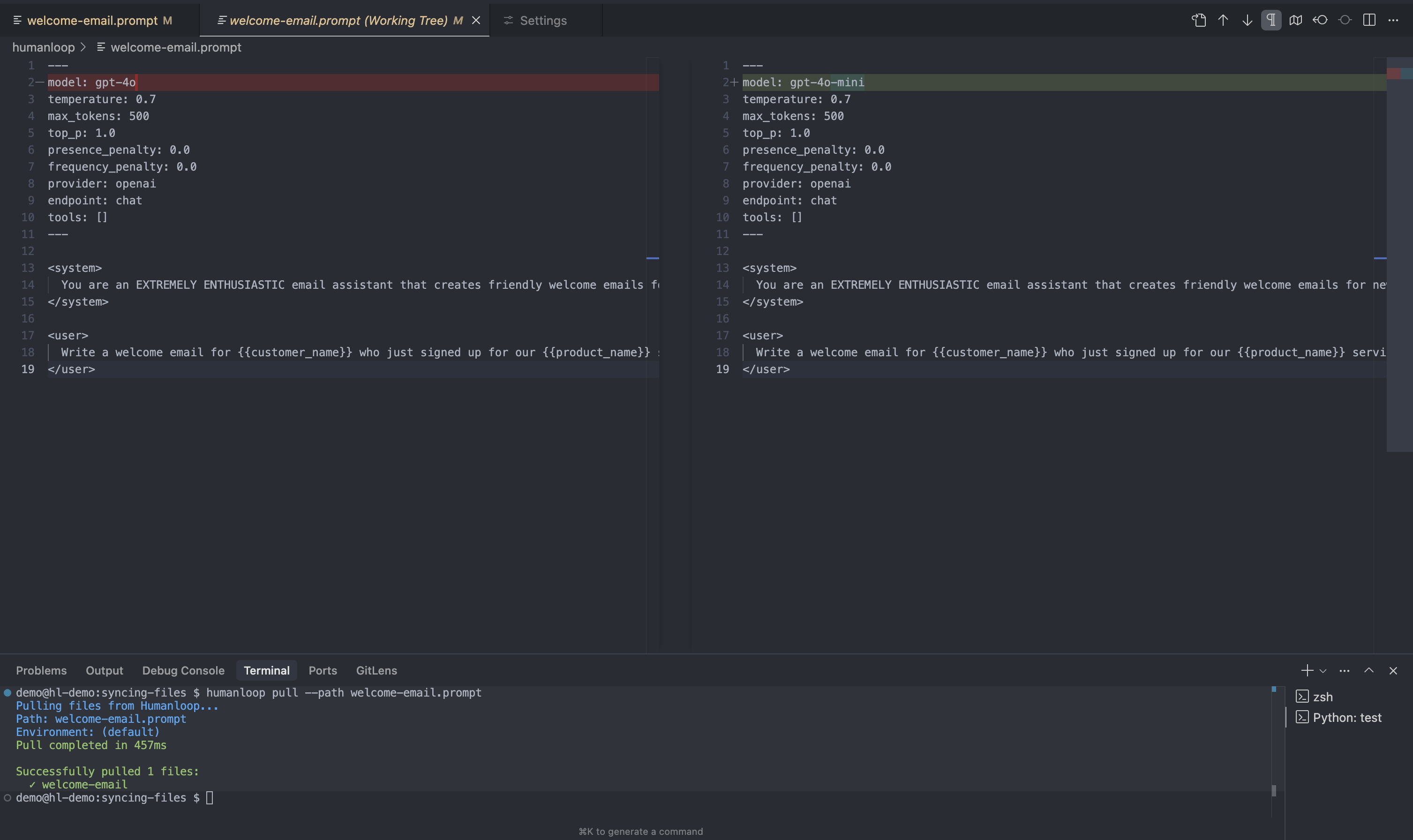

After modifying a Prompt in the Humanloop UI and deploying it, run humanloop pull to get the latest version, then use git diff to see what changed.

For example, if you update the model in your welcome-email Prompt from gpt-4o to gpt-4o-mini in the Humanloop UI and deploy it, after pulling the changes you would see:
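The exact git diff output depends on the contents of your file. As a rough stand-in, the Python sketch below uses difflib to produce a unified diff for the model change described above. The two file snippets are hypothetical and reduced to a single model line, which is an assumption about how the config section is written.

```python
import difflib

# Hypothetical before/after config sections, reduced to the changed field.
before = "---\nmodel: gpt-4o\n---\n"
after = "---\nmodel: gpt-4o-mini\n---\n"

diff_lines = difflib.unified_diff(
    before.splitlines(keepends=True),
    after.splitlines(keepends=True),
    fromfile="a/humanloop/welcome-email.prompt",
    tofile="b/humanloop/welcome-email.prompt",
)
diff_text = "".join(diff_lines)
print(diff_text)
```

The removed line is prefixed with a minus sign and the added line with a plus sign, the same convention git diff uses.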

By integrating your Prompts and Agents with version control, you create a more robust development workflow:

- Single source of truth for both code and AI components

- Change history with full audit trail of who changed what and when

- Local experimentation through editing Files directly in your IDE without needing the Humanloop UI

- Deployment safety via pull requests and code reviews for Prompt changes

Using with Agents

Everything you’ve learned about working with Prompt Files also applies to Agent Files.

The process is identical: pull from Humanloop, reference with the path parameter in agents.call and agents.log operations, and manage through version control.

Troubleshooting

If you encounter any issues while working with local Files, check these common solutions:

- API key not detected? Ensure it’s stored in a .env file at the top level and that you run commands from your project root.

- Changes not reflecting? Ensure you’ve deployed your Prompt in the Humanloop UI after making changes.

- SDK not finding files? Ensure the directory used when pulling matches the one in your SDK initialization (if you used --local-files-directory custom-dir when pulling, specify the same path in your SDK config for local_files_directory / localFilesDirectory).

Next Steps

By integrating Humanloop Files into your development workflow, you’ve bridged the gap between AI development and software engineering practices, making it easier to build robust AI applications.

Now that you’re using local Files, you might want to:

- Set up continuous integration to automatically test your Prompts and Agents when they change

- Learn more about the File formats to understand how to manually edit them if needed

- Explore environment labels to manage different versions for development, staging, and production